a Trace in the Sand

by Ruth Malan

February 2018

2/20/18

February Traces

What's a Trace?

A Trace is what I called my journal -- where I write my notes as I explore what it takes to create architecture and be a great architect. You might consider it a warning about my sense of humor and delight in Life's irony and the jokes the Universe plays on us, that a person who helps shape the field of architecture predominantly writes in so "disorganized" and emergent a style as a journal. [grin] But it is a format that emphasizes that we are, individually and as a collective, a field, on a learning journey --- many journeys, really. Individually. And across the lot of us.

I need to carve out time to get to Part II of the Visual Architecting keynote slidedeck -- going into more of the architecture modeling and heuristics that guide architecting, and then widening the field of view, to explore what architecture means, when we take another look at what is significant in "architecturally significant decisions." In the meantime, here's Part I (it's also on the Bredemeyer site):

10/3/17


As a bridge from Bill Pollack's generous introduction and presentation of the award, I of course thanked Dana Bredemeyer for his contribution to the work I was being recognized for, as well as what I was about to present. Anything "Visual Architecting" is very much a "standing on the shoulders of giants" endeavor, and not just Dana's, but every architect (of any title) we have ever worked with. By way of an outline of the talk, I told the audience the sketch (above) was a fairly accurate visualization of my talk -- I would iterate on, and elaborate, build out, our notion of software architecture. And I wouldn't be complete, in that self-set mission, in a timebox (of 30 minutes, or even, if my 20+ years in software architecture is anything to go by, a lifetime). |

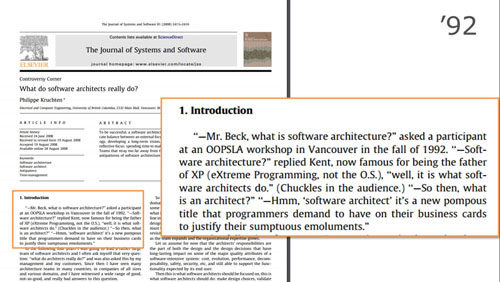

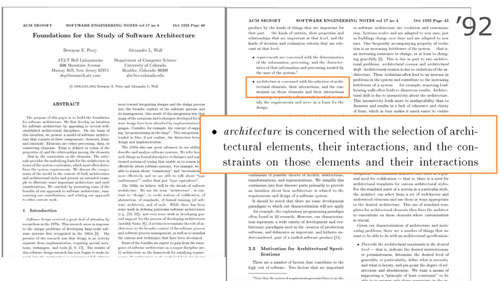

If we go back to 1992, we already see one of the narrative threads that have shaped our conceptualization of, and discourse around, software architecture, architects, and architecting:


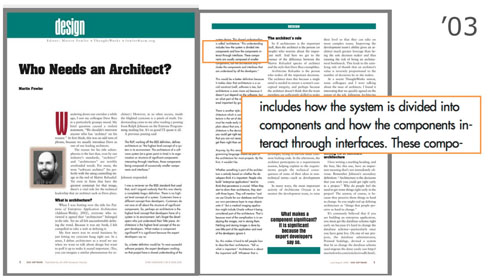

A (little over a) decade later, in 2003, we have Martin Fowler (in "Who needs an architect?") similarly perplexed, and quoting Ralph Johnson:


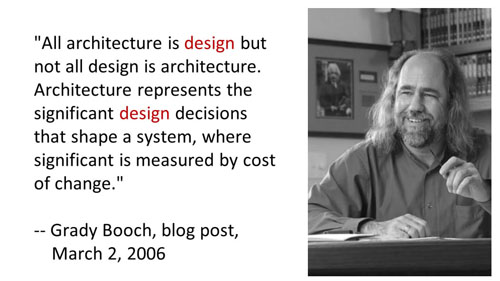

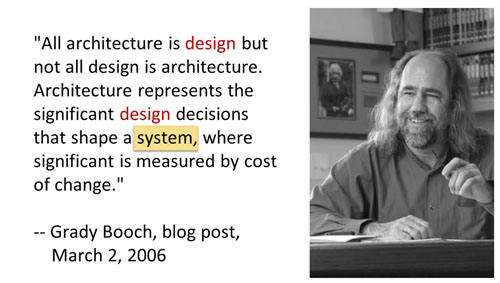

Let's sidestep the discomfort we have with someone (else?) doing "the important stuff" and getting "sumptuous emoluments," for a moment anyway, and follow the arc of software architecture to its recurrent destination, namely Grady Booch and Decisions!

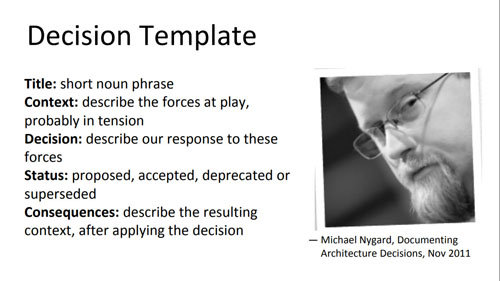

Decisions? Michael Nygard's post on Documenting Architecture Decisions presents a useful template. It has echoes of the patterns template used by the Gang of Four, and subsequent, in that it draws attention to the essential elements of Context (presenting forces and demands) and Consequences, not just the Decision itself. It's a template I and others recommend (though I like to add Alternatives Considered). Still, too much of a good thing sinks itself under its own weight, so an Architecture Decision Record leaves itself begging the question -- which decisions?
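As a minimal sketch of the template described above, a decision record could be held as a small data structure. The fields follow the elements named in the text (Context, Decision, Consequences, plus Alternatives Considered); the class name, the `render` method and the sample record are hypothetical, invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """One architecture decision with the forces around it (hypothetical sketch)."""
    title: str
    context: str        # forces and demands in play
    decision: str       # what we chose to do
    consequences: str   # what follows, good and bad
    alternatives_considered: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the record as plain text, one line per element."""
        lines = [
            f"Title: {self.title}",
            f"Context: {self.context}",
            f"Decision: {self.decision}",
            f"Consequences: {self.consequences}",
        ]
        if self.alternatives_considered:
            lines.append("Alternatives considered:")
            lines.extend(f"  - {alt}" for alt in self.alternatives_considered)
        return "\n".join(lines)


# Illustrative record; the content is invented for the example.
record = DecisionRecord(
    title="Put adapters at the system boundary",
    context="External systems change more often than our business rules.",
    decision="Core logic talks to the outside only through ports.",
    consequences="Cheaper to swap integrations; more indirection to learn.",
    alternatives_considered=["Call external APIs directly from core logic"],
)
print(record.render())
```

Keeping Context and Consequences as required fields, rather than optional ones, is one way to make it awkward to record the Decision alone.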

Which decisions? Architecturally significant decisions! Which are architecturally significant? The architect decides!

On what basis? Returning to Grady Booch: "significant is measured by cost of change"

"Bad architecture is expensive" -- paraphrasing Brian Foote, coauthor of the must-read classic "Big Ball of Mud"

The opening sentence to that paper observes that the de-facto standard in software architecture is <drum roll> "the big ball of mud" <badum tish> [Aside: Joe was in the SATURN audience, having presented earlier, so that was cool.] |

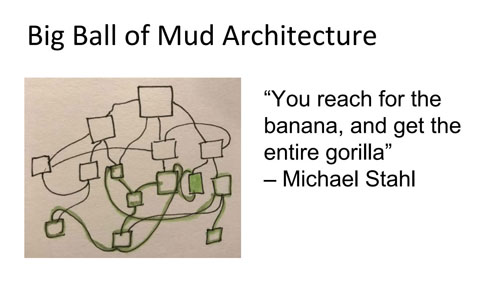

When you have a "big ball of mud," as Michael Stahl vividly put it, "you reach for the banana, and get the entire gorilla." Now that sketch is an idealization...

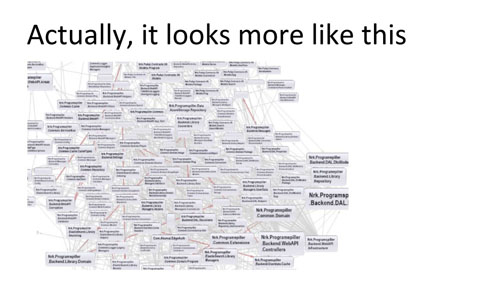

in "real life," the degree of entanglement is more like this... [above] [Image: from Undoing the harm of layers by Bjørn Bjartnes]

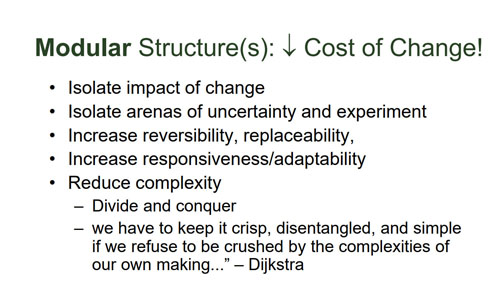

By contrast, a modular structure reduces cost of change by (and to the extent that it achieves) isolating change, shielding the rest of the system from cascading change. In a modular approach, parts of the system that are unstable, due to uncertainty and experimentation to resolve that uncertainty, can be shielded from other, better understood and more stable parts of the system. Parts can be plugged in, but removed if they don't work out, making for reversibility of decisions that don't pan out. They can be replaced with new or alternative parts, with minimal effect on other parts of the system, enabling responsiveness to emerging requirements or adaptation to different contexts. Further, it's a mechanism to cope with, and hence harness, complexity. Partitioning the system reduces how much complexity must be dealt with at once, allowing focus within the parts with reduced demand to attend (within the part) to complexity elsewhere in the system. We give a powerful programmatic affordance a handle that takes minimal understanding to invoke, and can selectively ignore (not all the time, but for a time), or may never even need to know (it depends), its internals.

So modularity and parts, or components, are key to managing change and associated costs. Returning to Martin Fowler's paper, indeed software architecture is acknowledged to include how the system is divided into components, and how they interact.

Going back to 1992, when Kent Beck was giving the architecture-architects thing a ¯\_(ツ)_/¯, Perry and Wolf were defining (software) architecture as being concerned with "the selection of architectural elements, their interactions, and the constraints on those elements and their interactions." [Hopefully those who are excited about Alicia Juarrero's work on constraints (1999), are tingly at the foreshadowing in Perry and Wolf's characterization (1992).] |

And, indeed, the contemporary go-to reference definition for software architecture (that being the definition in Wikipedia which, in this case, derives from that of the Clements et al team from the SEI, which dates back to the mid-90s), is in terms of the high level structures of the system, where those structures comprise software elements and the relations between them.

We're holding these two ideas about architecture in creative suspension -- architecture is decisions about "the important stuff" where important is distinguished by cost of change, and architecture is about the structure of the system, which has something to do with (lowering) the cost of change. Now we're going to take another pass at our conception of architecture (where how we conceive of architecture is being used in its double sense), and this quote (above) is my permission slip.

Architecture is design. Not all design, but importantly, architecture is design.

What is design? In "The Sciences of the Artificial," Herbert Simon ("ground zero in design discourse" -- Jabe Bloom) notes "Everyone designs who devises courses of action aimed at changing existing situations into preferred ones." This characterization is profound, for all its straightforward simplicity.

Design is what we do, when we want to get more of what we want than we'd get by "just doing it."

Now, if architecture is design, and architecture is, or at least includes, the shaping structure, the elements and relations -- the boxes and lines -- and design is about creating a better structure, how do we do that?

As one does, in contemplating life's profound questions, we might turn to the Tao. And in this case, the story of the dextrous butcher. In one translation, the master cook tells us:

Where natural features like rivers and mountains oblige, states have boundaries along them, but software doesn't have natural topology. Or does it?

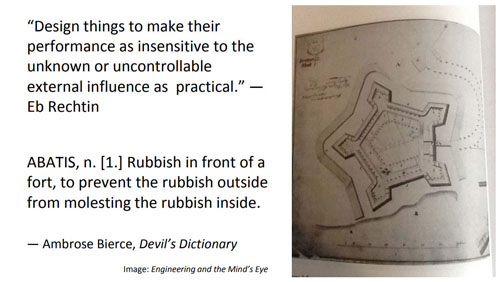

The system boundary is an obvious candidate. In the founding classic of system architecture, Eberhardt Rechtin presents heuristics gleaned from his amazing career as a system architect in aerospace, and master teacher of system architects in the seminal program he created at USC. One of these heuristics (is a "turtles all the way down" sort of thing, but applies also at the system level):

That, for me anyway, has echoes of fort design and Ambrose Bierce's Devil's Dictionary definition of abatis:


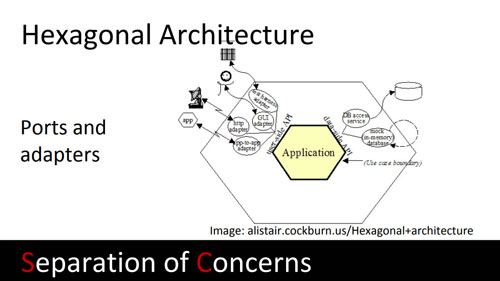

Which visually, and in intent, has echoes of Alistair Cockburn's hexagonal architecture (pattern). Here, adapters at the system boundary shield the (core) application (code) from interactions with the outside, keeping business logic uncontaminated by the peculiarities of external agents or systems and their states, interactions and interaction paradigms, and keeping business logic from leaching into user, or other system, interface code. Moreover, ports and adapters are a first mechanism of address for cost of change, partitioning the system boundary into plug-and-play access points.
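To make the ports-and-adapters idea a little more concrete, here is a minimal sketch, assuming a toy ordering domain. All names (`PaymentPort`, `OrderService`, the two adapters) are hypothetical and stand in for whatever the real system would have; the point is only that the core depends on the port, and adapters plug into it.

```python
from typing import Protocol


class PaymentPort(Protocol):
    """Port: what the application core needs from the outside world."""
    def charge(self, customer_id: str, cents: int) -> bool: ...


class OrderService:
    """Application core: business rules only, no knowledge of any vendor."""
    def __init__(self, payments: PaymentPort) -> None:
        self._payments = payments

    def place_order(self, customer_id: str, cents: int) -> str:
        if cents <= 0:
            return "rejected"
        charged = self._payments.charge(customer_id, cents)
        return "confirmed" if charged else "payment failed"


class FakeGatewayAdapter:
    """Adapter: translates the port's call into one external system's terms.
    This one only records calls, standing in for a real gateway."""
    def __init__(self) -> None:
        self.calls: list[tuple[str, int]] = []

    def charge(self, customer_id: str, cents: int) -> bool:
        self.calls.append((customer_id, cents))
        return True


class DecliningAdapter:
    """A second adapter plugged into the same port; the core is unchanged."""
    def charge(self, customer_id: str, cents: int) -> bool:
        return False


print(OrderService(FakeGatewayAdapter()).place_order("c-1", 2500))
print(OrderService(DecliningAdapter()).place_order("c-1", 2500))
```

Swapping one adapter for the other changes the outcome without touching `OrderService`, which is the plug-and-play access point in miniature.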

But what about the boundaries within the boundary? These abstractions we use to give our system internal form must, as Michael Feathers points out, be invented. They are conceits. In every sense of the word, perhaps. At least, when asked about the granularity of microservices, I point to the WELC master going "about so big." Obviously a joke, the joke being on us, the right answer of course being "the right size, and no bigger." Which is not an answer, but where can we look, for a better answer?

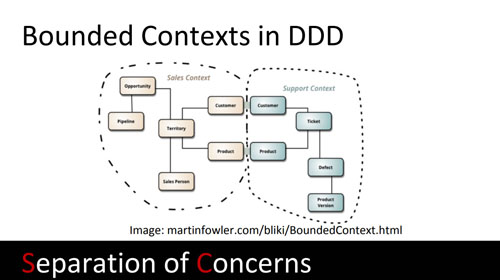

One place to go, in looking for the natural topology, to find the natural shape to follow in creating internal system boundaries, is the "problem" domain -- that is, the domain(s) being served by the system(s) we're evolve-building. And indeed, we're broadly using bounded contexts in Domain-Driven Design to suggest service boundaries. And when we get to the tricky parts, like "customer" or "product" that have potentially overlapping, yet different, meanings in different domains, we slow down, and move more carefully. A customer, after all, experiences themselves to be one person through all touchpoints with "the system," and doesn't want to feel like the system cleaved them brutalistically. So we separate domains, but the architect is noting this point of articulation, this tricky part, where we have to move more carefully. |
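One way to picture the "customer" tricky part is a sketch in which each bounded context keeps its own model, and a single explicit function marks the point of articulation. The two contexts (billing, support), their fields, and `customer_view` are all assumptions made up for illustration, not drawn from any particular system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BillingCustomer:
    """'Customer' as a hypothetical billing context sees it."""
    customer_id: str
    payment_terms_days: int


@dataclass(frozen=True)
class SupportCustomer:
    """'Customer' as a hypothetical support context sees it."""
    customer_id: str
    open_tickets: int


def customer_view(customer_id: str,
                  billing: dict[str, BillingCustomer],
                  support: dict[str, SupportCustomer]) -> dict[str, object]:
    """Point of articulation: stitch the per-context models into the one
    person the customer experiences themselves to be."""
    b: Optional[BillingCustomer] = billing.get(customer_id)
    s: Optional[SupportCustomer] = support.get(customer_id)
    return {
        "customer_id": customer_id,
        "payment_terms_days": b.payment_terms_days if b else None,
        "open_tickets": s.open_tickets if s else 0,
    }


billing = {"c-1": BillingCustomer("c-1", payment_terms_days=30)}
support = {"c-1": SupportCustomer("c-1", open_tickets=2)}
print(customer_view("c-1", billing, support))
```

The contexts share only the identifier; everything else about "customer" stays local, and the stitching happens in one named place where we know to move carefully.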

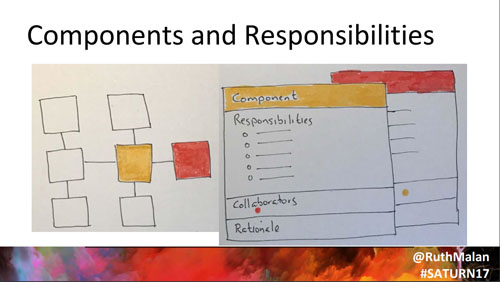

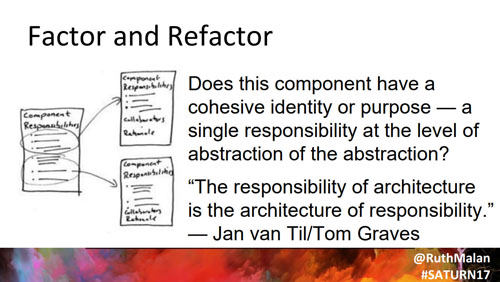

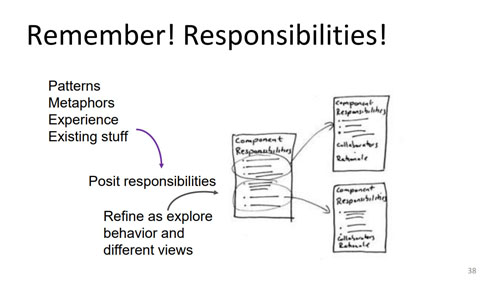

Another place to go is back to 1989 and Ward Cunningham and Kent Beck's CRC cards, but repurposed for components. We work with components and responsibilities in both directions -- start with a first cut notion of components the system will need, and identify and allocate responsibilities to them; or start with responsibilities evident from what the system needs to do, and factor to find components. Continue to update the responsibilities for each component, as more is learned as the system is explored and built out. |
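The two directions of working described above can be sketched with a small, hypothetical `ComponentCards` structure: allocate responsibilities to components we already have in mind, or start from what the system needs to do and see what has no home yet. The class, its method names, and the sample components are assumptions for illustration.

```python
from collections import defaultdict


class ComponentCards:
    """CRC-style cards repurposed for components (hypothetical sketch)."""

    def __init__(self) -> None:
        self._cards: dict[str, list[str]] = defaultdict(list)

    def allocate(self, component: str, responsibility: str) -> None:
        """Components first: give a responsibility to a known component."""
        if responsibility not in self._cards[component]:
            self._cards[component].append(responsibility)

    def move(self, responsibility: str, source: str, target: str) -> None:
        """Refactor: shift a responsibility, creating the target if it is new."""
        self._cards[source].remove(responsibility)
        self.allocate(target, responsibility)

    def unallocated(self, needed: list[str]) -> list[str]:
        """Responsibilities first: what the system must do that has no home."""
        placed = {r for rs in self._cards.values() for r in rs}
        return [r for r in needed if r not in placed]

    def card(self, component: str) -> list[str]:
        """Current responsibilities listed on one component's card."""
        return list(self._cards.get(component, []))


cards = ComponentCards()
cards.allocate("Catalog", "describe products")
cards.allocate("Catalog", "compute order totals")  # first cut, later questioned
needed = ["describe products", "compute order totals", "notify customer"]
print(cards.unallocated(needed))
cards.move("compute order totals", "Catalog", "Pricing")
print(cards.card("Catalog"), cards.card("Pricing"))
```

Moving "compute order totals" out of `Catalog` is the kind of update the cards are meant to make cheap as more is learned.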

These lists of responsibilities are a powerful and largely overlooked/underused tool in the architect's toolbelt. If the responsibilities don't cohere within an overarching responsibility, or purpose, that should trip the architect's boundary bleed detectors. We may need to refactor responsibilities, and discover new components in the process. We think that "naming things" is the big problem of software development, but "places to put things" is the proximate corollary. Leading to a neat contragram:

We forgive a line for what it doesn't capture, when it does capture so much of the very essence of a thing!

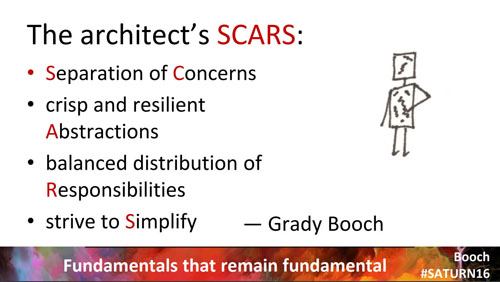

Grady Booch notes that, for all that changes, there are "fundamentals that remain fundamental." I took the liberty of rearranging Grady's fundamentals (identified in his keynote at SATURN 2016 and elsewhere), to create a mnemonic handle: SCARS*. You know, what we get from experience. Separation of concerns plays a role widely and variously -- we've already talked about separating interactions at the system boundary from the application core, looking to bounded contexts (domains of concern) to suggest abstractions, and separating responsibilities to create crisp and resilient abstractions. Resilience can be tested by doing thought experiments with anticipated changes -- assessing the impact against the responsibilities of current components, to see how well we're doing in isolating change. And as the system evolves, we can visualize and track changes to see how well our abstractions hold up. Having identified component responsibilities comes in handy in assessing balance as well as cohesion. It is readily evident if we're conceiving of a "god" component, or even demigods, with aspirations. As the story goes, to Thoreau's "simplify, simplify," Ralph Waldo Emerson retorted "One 'simplify' would have sufficed." Which nicely indicates a key strategy for simplifying -- do less.
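The thought experiment with anticipated changes, and the balance check for "god" components, can be roughed out in a few lines. This is a sketch under stated assumptions: responsibilities are plain strings, a change is described by the responsibilities it touches, and the `factor` threshold for flagging an overloaded component is an arbitrary choice, not a rule from the talk. The component names are invented.

```python
def impact(responsibilities: dict[str, set[str]],
           changes: dict[str, set[str]]) -> dict[str, list[str]]:
    """For each anticipated change, list the components whose
    responsibilities it touches. Fewer components, better isolation."""
    return {
        change: sorted(c for c, rs in responsibilities.items() if rs & touched)
        for change, touched in changes.items()
    }


def god_candidates(responsibilities: dict[str, set[str]],
                   factor: float = 2.0) -> list[str]:
    """Flag components carrying more than `factor` times the mean number
    of responsibilities (the threshold is an assumption)."""
    mean = sum(len(rs) for rs in responsibilities.values()) / len(responsibilities)
    return sorted(c for c, rs in responsibilities.items() if len(rs) > factor * mean)


components = {
    "Catalog": {"describe products", "search products"},
    "Pricing": {"compute order totals", "apply discounts"},
    "Orders": {"accept orders", "apply discounts"},
}
anticipated = {
    "new tax rules": {"compute order totals"},
    "loyalty discounts": {"apply discounts"},
}
for change, touched in impact(components, anticipated).items():
    print(change, "->", touched)
print("god candidates:", god_candidates(components))
```

In this toy data, "loyalty discounts" lands on two components, which is the sort of result that should prompt a second look at where "apply discounts" belongs.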

Returning to Dijkstra: "we know we have to keep it crisp, disentangled, and simple if we refuse to be crushed by the complexities of our own making." Having (named!) places to put things is a nice idea. But code is a matter of mind -- many minds even. Entropy is hard to work against, especially when the effects are hard to notice (until it is hard not to notice).

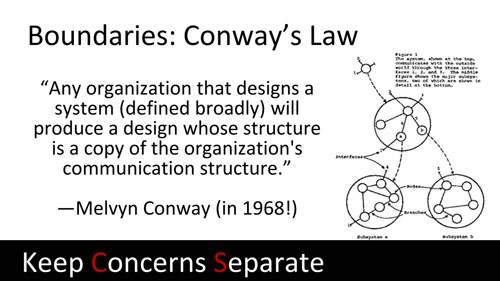

Conway's Law asserts that the (communication) structure of the organization, and in particular the communication paths it facilitates and inhibits, are powerful, even determining, shapers of the system. I paraphrase it thusly: "if the architecture of the system and the architecture of the organization are at odds, the architecture of the organization wins." Recognizing that's a law, like gravity, then, we can play along with it, and use team boundaries to maintain component or microservice boundaries, by lining them up. But. There is a but. We do have to remain vigilant, lest we get played by the very law we're using to game entropy. The forces we're using to protect and reinforce boundaries, increase inertial forces at the boundary -- it's harder to see when change is needed, and harder to change. Moreover, given what the communication paths foster and inhibit, we're going to have to put in explicit work, to ensure that we don't get scope creep within the component (microservice, etc.), and to manage duplication (with concomitant inconsistency) across components. Short-cuts within teams to avoid co-ordination overhead between teams (trying to create sync points in schedules, negotiate differing priorities, etc.), become erosion of cohesion and accommodations that "smudge" architectural intent. Slippery slope to a growing constellation of balls of mud, unless work is put in, not just to maintain order, but to create new order as context and understanding shifts and evolves, and raises new needs, challenges and forces that must be addressed within the system -- the organization, our mental models, and the code. |

So far we've been talking about boundaries, and designing crisp and resilient abstractions and keeping them that way. But if we swing by Grady Booch's characterization of software architecture once more, we might notice that while system hasn't been absent in what we've covered so far, it also hasn't been the focus.

Russell Ackoff is one of the pioneers of systems thinking and design. In 1974 he was writing papers with titles like "Systems, Messes, and Interactive Planning" -- how very 2017! In this roughly 10 minute (starting at 1:12) talk, Russ Ackoff covers and illustrates the key characteristics of systems. Notably,


Returning to Eb Rechtin:

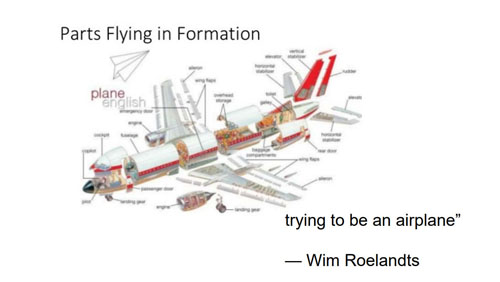

Without interrelationships, we have, as Wim Roelandts put it (back when we were working at HP): "parts flying in formation, trying to be an airplane." Obvious? Surely. Yet we need to act on this understanding. It is not enough to decompose a system into components or microservices or whatever the chunking du jour, minimizing interdependence, and proceed as if coherent systems will gracefully emerge from independent "two pizza" teams.

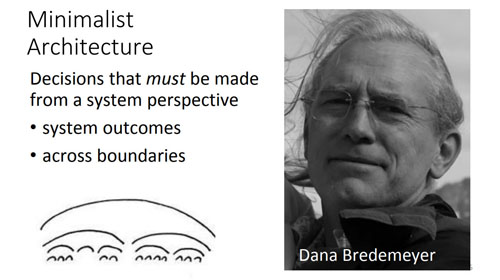

So we arrive at another characterization of architecture, one that we owe to Dana Bredemeyer: architecture decisions are those that must be made from a system perspective to achieve system outcomes (capabilities and properties of the system, as well as stakeholder outcomes and value in the ecosystem). A minimalist architecture uses this heuristic to filter for significance. If a decision can be made at local scope (within a microservice, say) without negatively impacting system outcomes, it is made there. Architecture decisions, on the other hand, are those that need to be made across boundaries (the system boundary, or across boundaries within the system), in order to get more of the system properties we want, and to build desired system capabilities. They are decisions that enable us to build more than just an aggregation of parts. We're addressing fit among the parts, and their interaction, to yield a coherent system. And addressing, as we do that, the complexity we've moved to interactions, when we shield and focus attention within the parts, to increase organizational and cognitive tractability.

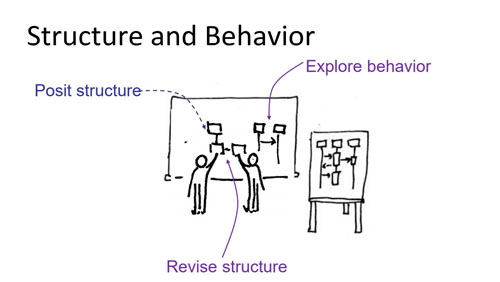

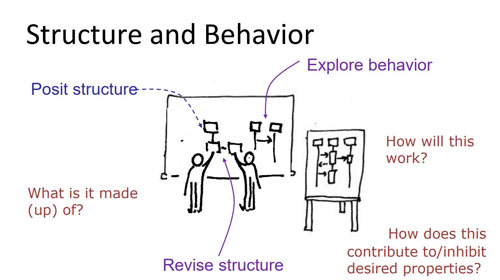

The system takes a different kind of attention: we're reasoning about the interaction among architectural elements to yield capabilities (and related value to stakeholders) with desired properties (impacting user-, operations-, and developer-experience, and impacting system integrity and sustainability), addressing the inherent challenges and tradeoffs that arise as we do so. Which means that we're exploring behavior, to direct discovery as we're exploring how to build the system, not just its parts. But this impacts how we conceive of and design the parts.

So we're positing structure, and asking "what is this system made of?," but also -- and soon -- exploring behavior, asking "how will this work?" and "how does this approach contribute to or inhibit the desired system properties and yield needed system behaviors?"

As we do this, we're going to learn more about the responsibilities of the components or architectural elements. And we need to be disciplined about updating the responsibilities and refactoring components/responsibilities to try out alternative structurings, or to improve the current one.


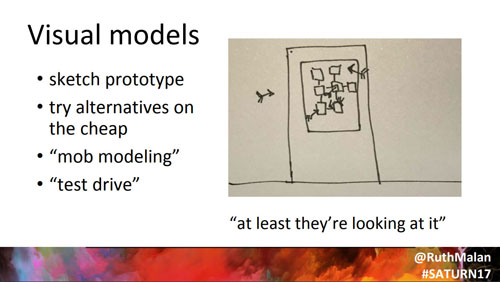

Design of complex systems is hard -- wickedly hard! And wicked problems take all the cognitive assist we can muster. Tradeoffs must be made because there is interaction -- not just interaction among components to create a capability, but interaction among properties. We draw diagrams or model (some aspect of the system) to think, alone, and to create a shared thoughtspace where we can think together (and across time) about the form and shape and flow of things, considering how-it-works both before we have code and when the very muchness of the code obfuscates and it is all too much to hold in our head, yet we need to think, explore, reason about interactions, cross-cutting concerns, how things work together, and such. [That long sentence reifies how soon too much becomes cognitively intractable.] Models help us test our ideas -- in an exploratory way when they are just sketches, and thought experiments, where we "animate" the models in mind and in conversation. Drawing views of our system, helps us notice the relationships between structure and function, to reason about relationships that give rise to and boost or damp properties. We pan across views, or out to wider frames, taking in more of the system and what it does where and how and why (again, because we must make tradeoffs we need to weigh value/import and risk/consequences and side effects). We sketch-prototype alternatives to try them out in the cheapest medium that fits what we're trying to understand and improve. We seek to probe, to learn, to verify the efficacy of the abstractions we're considering, under multiple simultaneous demands. We evolve the design. We factor and refactor; we reify and elaborate. We test and evolve. We make trade-offs and judgment calls. We bring what we can to bear, to best enable our judgment, given the concerns we're dealing with. Software is a highly cognitive substance with which to build systems on which people and organizations depend. So. 
We design-test our way, with different media and mediums to support, enhance, stress and reveal flaws in our thinking. Along the way -- early, and more as fits the moment -- we're "model storming" "out loud" "in pairs" or small groups. And all that agile stuff. Just in the cheapest medium for the moment, because we need to explore options quick and dirty. Hack them -- with sketches/models, with mockups, with just-enough prototypes. We use diagrams and models to see what we can't, or what is hard to see and reason about, with (just) code. Enough early. And enough along the way so that we course correct when we need to. So that we anticipate enough. Put in infrastructure in good time. Avoid getting coupled to assumptions that cement the project into expectations that are honed for too narrow a customer need/group. And suchness. Years ago I saw a cartoon where there's an architecture diagram on a door, and everyone is throwing darts at it, and the caption read "at least they're looking at it." I couldn't find the original, so unfortunately I can't give proper attribution and had to redraw it, or at least the gist I remember. |


Architecture decisions entail tradeoffs. We try for "and" type solutions, that give us more of more. More of the outcomes we seek, across multiple outcomes. Still, there are tradeoffs -- "[architecting] strives for fit, balance and compromise among the tensions of client needs and resources, technology, and multiple stakeholder interests" (Rechtin and Maier). Compromises mean not everyone is getting what they want (for themselves, or the stakeholders they see themselves beholden to serving) to the extent they want. Seeing the options and good ideas to resolve (only) the forces from the perspective of (just) a part, may... lead to questioning design decisions ("throwing darts", in the terms of the cartoon) made from the perspective of the system. It can be hard to see why anyone would give up a good thing at a local level to benefit another part of the system, or to avoid precluding a future strategic option or direction. Sometimes those questions lead to a better resolution. Sometimes they just mean compromise. Further, complex systems are, well, complex. Many, many! parts, in motion. In dynamic, and changing, contexts (of use, operations, evolutionary development). So there's uncertainty. And ambiguity. And lots of room for imperfect understanding. And mistakes. Will be made. Wrong turns taken. Reversed. Recovered from. We're fallible. So. More darts. Which is humbling -- more so, if we're not humble enough to stay fleet, to try to learn earlier and respond to what we're learning. All of which means we need to notice what is hard to notice from inside the tunnel of our own vision -- where what we're paying attention to, shapes what we perceive and pay attention to.

"A change of perspective is worth 80 IQ* points" reminds us to take a different vantage point, to see from a different perspective. Consider the system from different points of view; use the lens of various views. This can play out multiple ways, but includes considering the design (structure, dynamics and implications) from the perspective of security, and from the perspective of responsiveness to load spikes, etc. Another way to get a change of perspective, is to get another person's perspective. Invite peers working on other systems, say, to share what's been learned and seek out opportunities and weaknesses, things we missed. Our team can miss the gorilla, so to speak, when our attention is focused on the design issues of the moment. Fresh perspective, and even just naive questions about what the design means, can nudge an assumption or weakness into view. And merely telling the story, unfolding the narrative arc of the architecture to fit this person or audience, then that, gets us to adopt more their point of reference, across more perspectives -- in anticipation, and when we listen, really listen, to their response and questions. We need to adopt the discipline of not just accepting our initial understanding, but rather seeking different understandings. We investigate alternative views, to probe further, to better understand the system and its likely and possible stresses and strains. Doing so, gives us design options, and fall-backs. These are the significant design decisions, decisions about the important stuff, after all. We can understand the system as code, building up mental models of the structure and "how it works." And we can understand the system (as we envision it, and as we build-evolve it) through sketches or visual models with reasoned arguments, explaining, exploring and defending the design.
And we can understand the system through visualization of the system behavior, or through simulation or instrumentation (of prototypes, earlier, and the system in production, soon and then later, as the system is evolved). We can understand the system as perceived by someone new to the system, and as someone comfortable with the mental models we've imbued it with. We can understand the system directly and through the lens of an analogy (or hybrid blend of analogies). Understand it as loss and as gain. As a system we break down into parts, and as a system, or whole. |


* Instead of IQ points (which have an ableist cast, and have been used to discriminate unfairly, for example, across cultural backgrounds), it would be better to use something like pages from Nick Sousanis' Unflattening.

"The art of being wise is the art of knowing what to overlook." -- William James



I also write at:

- Bredemeyer Resources for Architects

Software Architecture Workshop